The landscape of world models is diverging into two distinct architectural approaches. On one side, we have video generation models scaling up to incredible fidelity. On the other, we have interactive token-based environments that allow agents to step through simulated physics.

The Video Generation Approach

Models like OpenAI's Sora and NVIDIA's Cosmos rely on vast quantities of video data. They internalize an implicit representation of physics simply by learning to predict what a video shows next. While their outputs are visually stunning and photorealistic, they often hallucinate physically implausible outcomes when pushed into interactive scenarios.

The primary drawback can be summarized as follows:

"If the model doesn't explicitly understand what is an object and what is the background—only what pixels come next—it will inherently fail at collision detection during embodiment."

The Interactive Environment Approach

Approaches exemplified by DeepMind's Genie 3 and Yann LeCun's JEPA architecture forgo photorealism for structural logic. In these models, the AI operates in a latent space where actions directly dictate state changes.
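To make "actions directly dictate state changes" concrete, here is a minimal sketch of an action-conditioned latent transition. Everything in it is hypothetical: the class name `LatentWorldModel`, the 4-dimensional latent, and the linear dynamics are assumptions chosen for illustration, and they do not describe how Genie 3 or JEPA are actually implemented.

```python
import numpy as np


class LatentWorldModel:
    """Toy transition z_next = A @ z + B @ a (hypothetical, not a real model)."""

    def __init__(self, latent_dim=4, action_dim=2, seed=0):
        rng = np.random.default_rng(seed)
        # Fixed matrices stand in for dynamics that would normally be learned.
        self.A = 0.9 * np.eye(latent_dim)
        self.B = rng.normal(scale=0.1, size=(latent_dim, action_dim))

    def step(self, z, a):
        # The next latent state depends on the current state AND the action.
        return self.A @ z + self.B @ a


model = LatentWorldModel()
z0 = np.zeros(4)
z_push = model.step(z0, np.array([1.0, 0.0]))  # agent pushes
z_idle = model.step(z0, np.array([0.0, 0.0]))  # agent does nothing
```

The point of the sketch is the signature of `step`: the same starting state leads to different next states depending on the action, which is the hook an agent needs to ask "what happens if I do this?".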

In conclusion, as we approach 2030, the true test won't be generating a beautiful video of a robot walking. It will be letting a real robot's brain simulate the next three seconds of its interaction with a complex environment accurately enough to avoid spilling a cup of coffee.
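The three-second test can be pictured as a short-horizon rollout: simulate each candidate action forward and reject any that the model predicts will tip the cup too far. The sketch below is a toy under stated assumptions. The damped-spring tilt dynamics, the `SPILL_LIMIT` threshold, and the function names are all hypothetical stand-ins for a learned world model.

```python
SPILL_LIMIT = 0.3  # hypothetical maximum safe cup tilt, in radians


def rollout_max_tilt(tilt, tilt_rate, accel, horizon_s=3.0, dt=0.1):
    """Simulate cup tilt over the horizon and return the largest absolute tilt."""
    steps = int(round(horizon_s / dt))
    max_tilt = abs(tilt)
    for _ in range(steps):
        # Toy dynamics: hand acceleration tips the cup, a spring-damper rights it.
        tilt_rate += (accel - 2.0 * tilt - 1.0 * tilt_rate) * dt
        tilt += tilt_rate * dt
        max_tilt = max(max_tilt, abs(tilt))
    return max_tilt


def fastest_safe_accel(candidates):
    """Return the largest candidate acceleration predicted not to spill, or None."""
    safe = [a for a in candidates if rollout_max_tilt(0.0, 0.0, a) < SPILL_LIMIT]
    return max(safe) if safe else None


best = fastest_safe_accel([0.0, 0.2, 1.0])
```

In this toy, the planner never touches pixels. It only needs the predicted state trajectory to be reliable over the next few seconds, which is the property the article argues matters for embodiment.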

Ecosystem Landscape

The World Model Wars

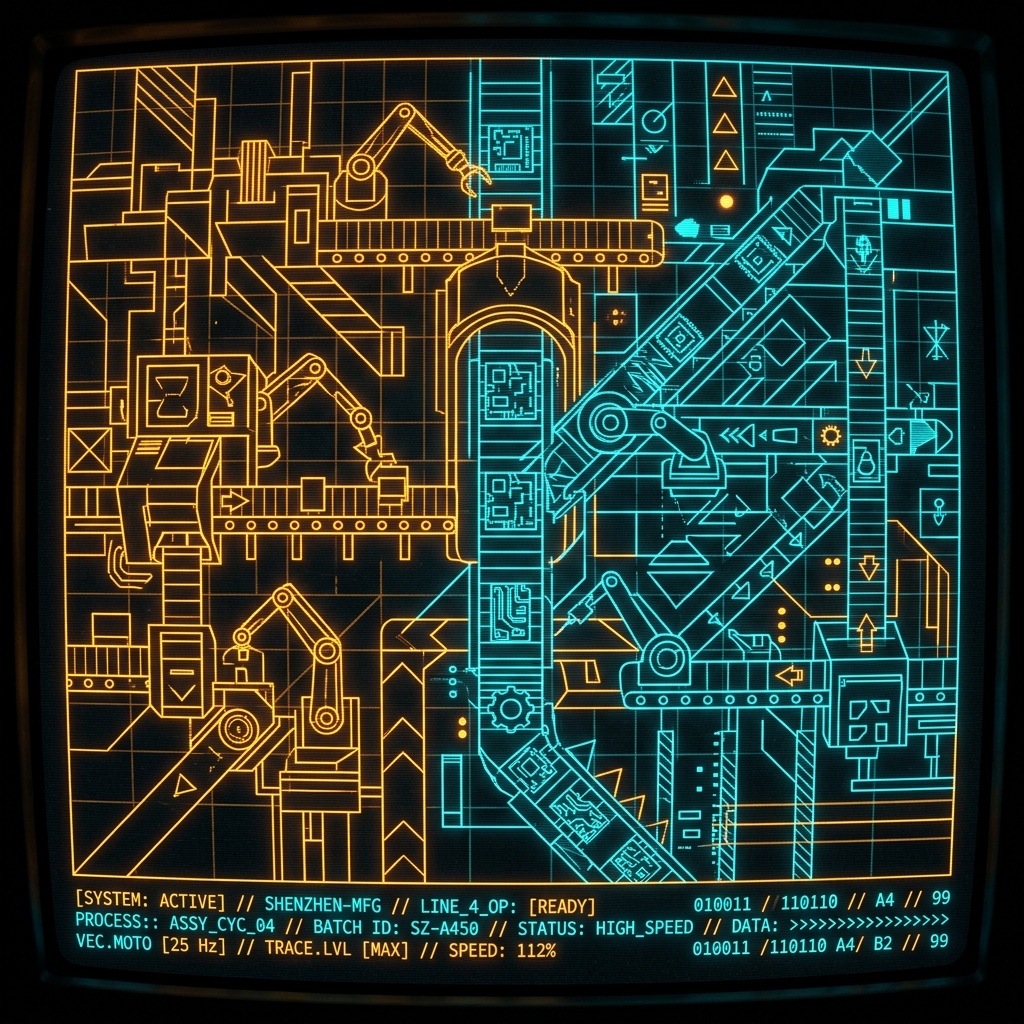

The Core Thesis: Generating photorealistic video (bottom-left) passes the human visual test, but forces physical AI to re-interpret raw pixel arrays whenever a collision occurs. Moving toward latent-space interaction (top-right) yields "uglier" visualizations, but provides the causally consistent simulation loops that embodied training requires.

Rolando Rabines is the founder of ROBOT WORLD and an investor in Physical AI through CAPAC. An MIT-educated engineer and CFA, his experience includes serving as a DARPA Systems Architect, Co-Founder of Macgregor, and leading Atomera through its IPO.

Disclaimer

The information presented in this article is for informational, educational, and analytical purposes only and does not constitute financial, legal, or investment advice. Do not make investment decisions based on this publication.